What is Database Architecture - Definitions and Terms

Database architecture is the high-level design that defines how data is organized, stored, processed, accessed, and secured across a system. It outlines key components, storage structures, query processors, access interfaces, and operational controls, and how they interact to ensure efficiency, reliability, and scalability. A clear architecture provides a blueprint for developers and administrators to build, maintain, and expand the database in line with the application’s business logic.

How an Application Interacts with Database Content

Accessing Data Efficiently

When an application interacts with a DB, it identifies records by their primary keys and follows relationships between tables through foreign keys. A well-designed database architecture lets the application rely on referential actions such as cascading deletes and updates, use indexes to keep queries fast, and retrieve data in an efficient, structured manner.
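The sketch below uses Python's built-in `sqlite3` module to show keys in action. The `customers` and `orders` tables are hypothetical. Note that SQLite enforces foreign keys only after `PRAGMA foreign_keys = ON`; other engines enforce them by default.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves FK enforcement off by default

conn.executescript("""
CREATE TABLE customers (
    id   INTEGER PRIMARY KEY,              -- primary key identifies each customer
    name TEXT NOT NULL
);
CREATE TABLE orders (
    id          INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL
        REFERENCES customers(id) ON DELETE CASCADE,  -- FK with a cascading delete
    total       REAL NOT NULL
);
CREATE INDEX idx_orders_customer ON orders(customer_id);  -- speeds up the join below
""")

conn.execute("INSERT INTO customers (id, name) VALUES (1, 'Ada'), (2, 'Linus')")
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(1, 19.99), (1, 5.00), (2, 42.00)],
)

# Follow the foreign key with a join to fetch one customer's orders.
rows = conn.execute(
    """SELECT c.name, o.total
       FROM customers AS c JOIN orders AS o ON o.customer_id = c.id
       WHERE c.id = ? ORDER BY o.id""",
    (1,),
).fetchall()
print(rows)  # [('Ada', 19.99), ('Ada', 5.0)]

# Deleting the parent row cascades to its child rows.
conn.execute("DELETE FROM customers WHERE id = 1")
remaining = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
print(remaining)  # 1
```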

Optimizing Performance

Minimizing the load generated by each query frees resources, so the database can handle more concurrent operations. That headroom also makes maintenance tasks such as hot backups practical: a hot backup runs while the database stays online, without interrupting the storage engine, while overall performance and stability are maintained.
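As a small stand-in for the hot-backup idea, Python's `sqlite3.Connection.backup` (Python 3.7+) wraps SQLite's online backup API, which copies a live database in steps. This is a sketch with a hypothetical `events` table; server databases ship their own backup tooling.

```python
import sqlite3

source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, payload TEXT)")
source.executemany("INSERT INTO events (payload) VALUES (?)",
                   [(f"event-{i}",) for i in range(1000)])
source.commit()

copied_steps = []

def report(status, remaining, total):
    # Called after each step with the pages still to copy and the total page count.
    copied_steps.append((remaining, total))

target = sqlite3.connect(":memory:")
# Copy one page per step; SQLite releases its lock on the source between steps,
# so the copy does not hold the database for the whole duration.
source.backup(target, pages=1, progress=report)

# The source keeps accepting writes; the finished copy is independent of it.
source.execute("INSERT INTO events (payload) VALUES ('after-backup')")
source.commit()

print(target.execute("SELECT COUNT(*) FROM events").fetchone()[0])  # 1000
```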

Core Pieces of Database Architecture

Storage Layer

This layer includes the physical and logical structures where data resides, such as tables, files, indexes, and partitions. It defines how data is organized on disk, optimized for fast retrieval, and managed across storage devices to ensure durability and efficiency.

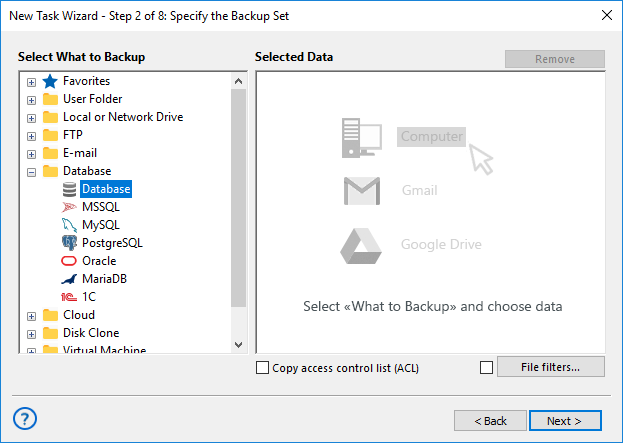
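To see how a storage structure such as an index changes the access path, the following sketch asks SQLite for its query plan before and after creating an index on a hypothetical `readings` table. On recent SQLite versions the first plan reports a full `SCAN` and the second a `SEARCH` using the index; the exact wording varies by version.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE readings (id INTEGER PRIMARY KEY, sensor TEXT, value REAL)")
conn.executemany(
    "INSERT INTO readings (sensor, value) VALUES (?, ?)",
    [(f"s{i % 50}", float(i)) for i in range(5000)],
)

def plan(sql, params=()):
    # EXPLAIN QUERY PLAN rows have four columns; the last one is the readable detail.
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, params)]

query = "SELECT value FROM readings WHERE sensor = ?"
before = plan(query, ("s7",))   # no index yet: every row must be scanned
conn.execute("CREATE INDEX idx_readings_sensor ON readings(sensor)")
after = plan(query, ("s7",))    # the planner can now search the index instead
print(before)
print(after)
```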

Compute Layer

Responsible for processing queries and executing operations, this layer encompasses query planners, executors, and caching mechanisms. It optimizes performance by managing computation resources, parallel execution, and in-memory operations.
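The caching part of this layer can be illustrated with a deliberately simple result cache. `CachingExecutor` is a hypothetical helper, not a real DBMS component: it keys results on the SQL text and parameters and drops everything on any write. Production engines typically cache execution plans and data pages with much finer-grained invalidation.

```python
import sqlite3

class CachingExecutor:
    """Hypothetical compute-layer helper: caches read results, clears them on writes."""

    def __init__(self, conn):
        self.conn = conn
        self.cache = {}
        self.hits = 0

    def query(self, sql, params=()):
        key = (sql, tuple(params))
        if key in self.cache:          # serve repeated reads from memory
            self.hits += 1
            return self.cache[key]
        rows = self.conn.execute(sql, params).fetchall()
        self.cache[key] = rows
        return rows

    def execute_write(self, sql, params=()):
        self.conn.execute(sql, params)
        self.conn.commit()
        self.cache.clear()  # coarse invalidation: any write drops every cached result

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (id INTEGER PRIMARY KEY, price REAL)")
ex = CachingExecutor(conn)
ex.execute_write("INSERT INTO products (price) VALUES (?)", (9.5,))

q = "SELECT SUM(price) FROM products"
print(ex.query(q), ex.query(q), ex.hits)  # [(9.5,)] [(9.5,)] 1
ex.execute_write("INSERT INTO products (price) VALUES (?)", (0.5,))
print(ex.query(q))  # [(10.0,)]
```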

Access Layer

The access layer handles connectivity between applications and the DB. It includes drivers, APIs, and connection pools, ensuring secure, efficient, and reliable communication while managing concurrency and session control.
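Connection pooling is one of the access-layer techniques mentioned above. The sketch below is a minimal fixed-size pool built on `queue.Queue`; the `ConnectionPool` class and its methods are hypothetical. With `":memory:"` each pooled connection opens its own separate database, which is fine for showing the borrowing mechanics.

```python
import queue
import sqlite3
from contextlib import contextmanager

class ConnectionPool:
    """Hypothetical fixed-size pool: reuses open connections instead of reconnecting."""

    def __init__(self, database, size=4):
        self._idle = queue.Queue(maxsize=size)
        for _ in range(size):
            # check_same_thread=False allows handing a connection to another thread;
            # the pool ensures only one borrower uses it at a time.
            self._idle.put(sqlite3.connect(database, check_same_thread=False))

    @contextmanager
    def connection(self, timeout=5.0):
        conn = self._idle.get(timeout=timeout)  # blocks (then raises queue.Empty) if none free
        try:
            yield conn
        finally:
            self._idle.put(conn)  # always return the connection, even on error

    def available(self):
        return self._idle.qsize()

pool = ConnectionPool(":memory:", size=2)
with pool.connection() as conn:
    print(conn.execute("SELECT 1 + 1").fetchone()[0])  # 2
    print(pool.available())  # 1
print(pool.available())  # 2
```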

Integration Layer

This layer facilitates data movement and synchronization across systems. It covers ETL/ELT pipelines, messaging frameworks, change data capture (CDC), and replication, enabling seamless integration between heterogeneous applications and databases.
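Change data capture can take many forms (log-based readers, triggers, timestamps). The sketch below shows a simple trigger-based variant in SQLite: triggers append every change on a hypothetical `accounts` table to a `change_log`, and a downstream consumer replays the log in order while tracking its position.

```python
import sqlite3

source = sqlite3.connect(":memory:")
source.executescript("""
CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance REAL NOT NULL);

-- Change log filled by triggers: a simple, trigger-based form of CDC.
CREATE TABLE change_log (
    seq     INTEGER PRIMARY KEY AUTOINCREMENT,
    op      TEXT NOT NULL,
    id      INTEGER NOT NULL,
    balance REAL
);
CREATE TRIGGER accounts_ins AFTER INSERT ON accounts BEGIN
    INSERT INTO change_log (op, id, balance) VALUES ('I', NEW.id, NEW.balance);
END;
CREATE TRIGGER accounts_upd AFTER UPDATE ON accounts BEGIN
    INSERT INTO change_log (op, id, balance) VALUES ('U', NEW.id, NEW.balance);
END;
CREATE TRIGGER accounts_del AFTER DELETE ON accounts BEGIN
    INSERT INTO change_log (op, id, balance) VALUES ('D', OLD.id, NULL);
END;
""")

source.execute("INSERT INTO accounts (id, balance) VALUES (1, 100), (2, 50)")
source.execute("UPDATE accounts SET balance = 75 WHERE id = 2")
source.execute("DELETE FROM accounts WHERE id = 1")
source.commit()

# A downstream replica applies changes in log order and remembers its position.
replica = {}
last_seq = 0
for seq, op, row_id, balance in source.execute(
        "SELECT seq, op, id, balance FROM change_log WHERE seq > ? ORDER BY seq",
        (last_seq,)):
    if op == "D":
        replica.pop(row_id, None)
    else:
        replica[row_id] = balance
    last_seq = seq

print(replica, last_seq)  # {2: 75.0} 4
```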

Operations Layer

Focused on reliability and maintainability, this layer encompasses security controls, backup and restore processes, monitoring tools, and high availability/disaster recovery (HA/DR) strategies, ensuring data integrity, continuous operation, and quick recovery from failures.

Note: The Shared-Nothing Architecture (SNA) is a distributed system design in which each node operates independently, with its own CPU, memory, and storage. Because nodes do not share these resources, the design avoids contention for shared memory or disks, scales out by adding nodes, and limits the impact of a single node failure, making it well suited to large, high-performance databases.
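A minimal sketch of the shared-nothing idea: each hypothetical `Node` owns a private store, and a stable hash of the key decides which node holds it. Plain modulo hashing is used here for brevity; it moves most keys when the node count changes, which is why real systems often prefer consistent hashing or range partitioning.

```python
import hashlib

class Node:
    """Hypothetical shared-nothing node: private storage, nothing shared with peers."""
    def __init__(self, name):
        self.name = name
        self.store = {}

class Cluster:
    def __init__(self, node_count):
        self.nodes = [Node(f"node-{i}") for i in range(node_count)]

    def owner(self, key):
        # A stable hash (unlike Python's per-process hash()) routes a key
        # to the same node from every client.
        digest = hashlib.sha256(key.encode()).digest()
        return self.nodes[int.from_bytes(digest[:8], "big") % len(self.nodes)]

    def put(self, key, value):
        self.owner(key).store[key] = value

    def get(self, key):
        return self.owner(key).store.get(key)

cluster = Cluster(node_count=3)
for i in range(9):
    cluster.put(f"user:{i}", {"id": i})

print(cluster.get("user:4"))                  # {'id': 4}
print([len(n.store) for n in cluster.nodes])  # per-node counts depend on the hash
```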

Common Styles of Database Architecture

OLTP vs OLAP

Online Transaction Processing (OLTP) systems are optimized for handling high volumes of short, transactional queries, such as order processing or banking operations. Online Analytical Processing (OLAP) systems, in contrast, are designed for complex, large-scale analytical queries, reporting, and data mining.
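The contrast shows up in the shape of the queries. In this sketch over a hypothetical `sales` table, the OLTP-style statement is a short transaction that touches one row by primary key, while the OLAP-style query scans and aggregates across many rows.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""CREATE TABLE sales (
    id INTEGER PRIMARY KEY, region TEXT, product TEXT, amount REAL)""")
conn.executemany(
    "INSERT INTO sales (region, product, amount) VALUES (?, ?, ?)",
    [("north", "widget", 10.0), ("south", "widget", 7.5),
     ("north", "gadget", 20.0), ("south", "gadget", 2.5)],
)
conn.commit()

# OLTP-style: a short transaction that updates one row located by primary key.
with conn:  # commits on success, rolls back on exception
    conn.execute("UPDATE sales SET amount = amount + 1 WHERE id = ?", (1,))

# OLAP-style: scan many rows and aggregate them.
totals = conn.execute(
    "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
).fetchall()
print(totals)  # [('north', 31.0), ('south', 10.0)]
```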

Single-Node vs Distributed

Single-node databases run entirely on one server, providing simplicity but limited scalability. Distributed architectures use sharding, replication, and consensus algorithms to spread data across multiple nodes, ensuring fault tolerance, high availability, and horizontal scalability for large datasets.
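Consensus protocols such as Raft and Paxos rely on majority quorums. The helper functions below are a sketch of that arithmetic only, not a consensus implementation: how many votes a cluster needs, how many node failures it tolerates, and when read and write quorums are guaranteed to overlap.

```python
def majority(n):
    """Smallest number of nodes that forms a majority of an n-node cluster."""
    return n // 2 + 1

def tolerated_failures(n):
    """Nodes that can fail while a majority is still reachable."""
    return n - majority(n)

def quorums_overlap(n, w, r):
    """True when every write quorum of size w intersects every read quorum of size r."""
    return w + r > n

for n in (3, 4, 5):
    print(n, majority(n), tolerated_failures(n))
# 3 2 1
# 4 3 1
# 5 3 2

print(quorums_overlap(3, 2, 2), quorums_overlap(3, 1, 1))  # True False
```

The output also shows why clusters usually have an odd number of nodes: going from three to four nodes raises the quorum size without tolerating any additional failure.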

Warehouse, Data Lake, Lakehouse

Data warehouses store curated, structured schemas optimized for reporting and analytics. Data lakes store raw data in its native format, whether structured, semi-structured, or unstructured, allowing flexible exploration. Lakehouse architectures combine both approaches, offering the structure of warehouses with the flexibility and scale of lakes.
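One way to see the difference is schema-on-read versus schema-on-write. In this sketch the raw JSON lines are hypothetical: the lake-style reader interprets records only at query time, while the warehouse-style loader validates and types every record up front and rejects malformed ones.

```python
import json

# Raw records as they might land in a data lake: JSON lines, loosely structured.
raw_lines = [
    '{"user": "ada", "amount": "12.50", "ts": "2024-01-01"}',
    '{"user": "linus", "amount": 7}',
    '{"user": "grace", "note": "no amount field"}',
]

def read_lake(lines):
    """Schema-on-read: interpret raw records only when a query needs them."""
    for line in lines:
        record = json.loads(line)
        if "amount" in record:
            yield record["user"], float(record["amount"])

def load_warehouse(lines):
    """Schema-on-write: validate and type every record before storing it."""
    table, rejected = [], []
    for line in lines:
        record = json.loads(line)
        try:
            table.append({"user": str(record["user"]),
                          "amount": float(record["amount"])})
        except (KeyError, TypeError, ValueError):
            rejected.append(record)  # malformed rows never reach the curated table
    return table, rejected

print(sum(amount for _, amount in read_lake(raw_lines)))  # 19.5
table, rejected = load_warehouse(raw_lines)
print(len(table), len(rejected))  # 2 1
```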

Large-Scale MPP/Columnar

Massively Parallel Processing (MPP) systems split large queries across multiple nodes to accelerate computation. Columnar storage formats organize data by columns rather than rows, significantly improving performance for aggregation-heavy analytical workloads on large-scale datasets.
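The sketch below stores the same hypothetical table row-wise and column-wise, then sums one column the MPP way: split it into chunks, aggregate each chunk in a worker, and combine the partial results. Threads stand in for nodes here; in CPython they illustrate the split-and-combine structure rather than a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor

# The same three-column table in two layouts.
rows = [(i, f"item-{i}", float(i % 10)) for i in range(100_000)]  # row-oriented
columns = {                                                        # column-oriented
    "id":    [r[0] for r in rows],
    "name":  [r[1] for r in rows],
    "price": [r[2] for r in rows],
}

# Row layout: every row is visited to read a single field.
row_total = sum(r[2] for r in rows)

# Columnar layout: the aggregate touches only the "price" column.
price = columns["price"]

def partial_sum(bounds):
    start, end = bounds
    return sum(price[start:end])

# MPP-style split: each worker aggregates one chunk, then the partials are combined.
chunk = 25_000
ranges = [(s, min(s + chunk, len(price))) for s in range(0, len(price), chunk)]
with ThreadPoolExecutor(max_workers=4) as pool:
    col_total = sum(pool.map(partial_sum, ranges))

print(row_total == col_total, col_total)  # True 450000.0
```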

Event-Driven, CQRS, Microservices

Modern architectures often rely on event-driven streaming and microservices patterns, enabling decoupled, real-time data processing. The CQRS (Command Query Responsibility Segregation) pattern separates read and write operations to optimize performance, scalability, and maintainability in complex systems.
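A minimal in-memory sketch of CQRS combined with an event log: command handlers only append events on the write side, and a separate projection builds a query-friendly read model from those events. The event names and the shopping-cart domain are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Event:
    kind: str      # "ItemAdded" or "ItemRemoved" (hypothetical event names)
    cart_id: str
    sku: str

event_log = []     # write side: an append-only log of events

def handle_add_item(cart_id, sku):
    """Command handler: records the change as an event; commands return no data."""
    event_log.append(Event("ItemAdded", cart_id, sku))

def handle_remove_item(cart_id, sku):
    event_log.append(Event("ItemRemoved", cart_id, sku))

def project_cart_sizes(events):
    """Read side: a denormalized view rebuilt from events, shaped for queries."""
    sizes = {}
    for e in events:
        delta = 1 if e.kind == "ItemAdded" else -1
        sizes[e.cart_id] = sizes.get(e.cart_id, 0) + delta
    return sizes

handle_add_item("c1", "book")
handle_add_item("c1", "pen")
handle_add_item("c2", "mug")
handle_remove_item("c1", "pen")

read_model = project_cart_sizes(event_log)
print(read_model)  # {'c1': 1, 'c2': 1}
```

Because the read model is derived, it can be rebuilt, reshaped, or stored in a different database without touching the write path.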

Deployment Models and High Availability Patterns

Deployment Models

Databases can be deployed in several ways. On-premises deployments give full control over hardware, network, and security. Managed cloud services offer scalable, fully maintained environments with minimal operational overhead. Serverless databases scale automatically with demand, which suits variable workloads and reduces administrative effort.

High Availability Patterns

High availability ensures continuous operation and fault tolerance. Active-passive setups fail over to a standby replica when the primary fails. Active-active configurations process requests on multiple nodes for load balancing and redundancy. Multi-region deployments replicate data across locations to reduce latency and protect against regional failures.
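The active-passive pattern can be sketched as a router that sends all traffic to the primary and promotes the standby when the primary stops responding. `Replica`, `ReplicaDown`, and `ActivePassiveRouter` are hypothetical; a real deployment must also fence the old primary so it stops accepting writes, and must account for replication lag, both of which this sketch ignores.

```python
class ReplicaDown(Exception):
    pass

class Replica:
    """Hypothetical stand-in for a database node that may be unavailable."""
    def __init__(self, name, healthy=True):
        self.name = name
        self.healthy = healthy

    def execute(self, sql):
        if not self.healthy:
            raise ReplicaDown(self.name)
        return f"{self.name} ran: {sql}"

class ActivePassiveRouter:
    """Send all traffic to the primary; promote the standby if the primary fails."""
    def __init__(self, primary, standby):
        self.primary, self.standby = primary, standby

    def execute(self, sql):
        try:
            return self.primary.execute(sql)
        except ReplicaDown:
            # Failover: swap roles so the standby becomes the new primary.
            self.primary, self.standby = self.standby, self.primary
            return self.primary.execute(sql)

router = ActivePassiveRouter(Replica("db-a"), Replica("db-b"))
print(router.execute("SELECT 1"))   # db-a ran: SELECT 1
router.primary.healthy = False      # simulate a primary outage
print(router.execute("SELECT 1"))   # db-b ran: SELECT 1
```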

Database Design Layers

Understanding databases requires more than just knowing how to store information—it also involves grasping how a system is designed, how data is organized, and how it is structured internally. These concepts determine how efficiently data can be stored, accessed, and processed, and provide the foundation for building reliable, scalable, and maintainable database systems.

Architecture defines the high-level blueprint of a DB system, specifying its core components, their roles, and how they interact to handle storage, processing, and access. It sets the framework for developers and administrators to build, maintain, and scale the database efficiently.

Schema describes the logical organization of the data within the system. It defines tables, fields, indexes, constraints, and relationships between entities, providing a structured framework for storing and accessing information in accordance with business rules.
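Constraints are where a schema encodes business rules. In this sketch a hypothetical `employees` table declares a uniqueness rule and a check rule, and SQLite rejects rows that violate either one with `sqlite3.IntegrityError`.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
CREATE TABLE employees (
    id     INTEGER PRIMARY KEY,
    email  TEXT NOT NULL UNIQUE,                -- no two employees share an email
    salary REAL NOT NULL CHECK (salary > 0)     -- business rule: salaries are positive
)""")
conn.execute("INSERT INTO employees (email, salary) VALUES ('ada@example.com', 5000)")

violations = []
for email, salary in [("ada@example.com", 6000), ("bob@example.com", -1)]:
    try:
        conn.execute("INSERT INTO employees (email, salary) VALUES (?, ?)", (email, salary))
    except sqlite3.IntegrityError as exc:
        violations.append(str(exc))  # the duplicate email, then the negative salary

print(len(violations))  # 2
```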

Data Structure refers to the internal format and organization of individual data elements, such as arrays, lists, trees, or hash tables. It determines how data is physically represented and manipulated in memory or on storage devices, enabling efficient operations at the granular level.
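The choice of data structure decides which operations are cheap. In this sketch a Python dict plays the role of a hash index and a sorted list searched with `bisect` stands in for the ordered leaf level of a B-tree; neither is how a specific engine implements its indexes.

```python
import bisect

keys = [3, 8, 15, 23, 42, 57, 91]            # kept sorted, like B-tree leaf entries
hash_index = {k: f"row-{k}" for k in keys}   # hash table: key -> row

# Point lookup: a hash table finds one key in expected constant time.
print(hash_index[42])  # row-42

def range_scan(sorted_keys, low, high):
    """Return keys in [low, high] using binary search on the sorted order."""
    start = bisect.bisect_left(sorted_keys, low)
    end = bisect.bisect_right(sorted_keys, high)
    return sorted_keys[start:end]

# Range query: sorted order answers it directly;
# a hash table would have to examine every key.
print(range_scan(keys, 10, 60))  # [15, 23, 42, 57]
```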

Together, these layers—architecture, schema, and data structures—form a complete view of how a database is designed, organized, and operated to deliver reliable and efficient data management.

Levels of Database Architecture

DB design often relies on abstract models that help separate concerns between different levels of a system. Two widely used approaches are the three-schema architecture and the three-tier architecture, each providing a framework to understand, organize, and manage data in complex systems.

Three-Schema Architecture defines three levels of abstraction for a database:

External Schema

This is the view of the database as seen by individual users or applications. It determines what data a particular user can access, often tailoring tables, columns, or subsets of data to meet their needs.

Conceptual Schema

This level represents the logical structure of the entire database, independent of any user or application. It organizes entities, relationships, constraints, and views, providing a unified representation of the system’s data.

Internal Schema

This is the physical storage level, describing how data is actually stored on disk or other media, including indexes, file structures, partitions, and access paths. It ensures efficiency, durability, and performance.
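The three levels map onto familiar SQL objects. In this sketch on a hypothetical `patients` table, the table is the conceptual level, the index is an internal-level access path, and the view is an external schema for one application. SQLite has no user accounts or `GRANT`, so it cannot enforce that the application sees only the view; a server DBMS would grant access to the view and not the base table.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Conceptual level: the full logical table, including sensitive columns.
CREATE TABLE patients (
    id        INTEGER PRIMARY KEY,
    name      TEXT NOT NULL,
    ward      TEXT NOT NULL,
    diagnosis TEXT
);

-- Internal level: an access path that affects performance, not results.
CREATE INDEX idx_patients_ward ON patients(ward);

-- External level: a view exposing only what a scheduling app needs.
CREATE VIEW ward_roster AS
    SELECT name, ward FROM patients;

INSERT INTO patients (name, ward, diagnosis) VALUES
    ('A. Lovelace', 'east', 'confidential'),
    ('G. Hopper',   'west', 'confidential');
""")

cur = conn.execute("SELECT * FROM ward_roster WHERE ward = 'east'")
print([d[0] for d in cur.description], cur.fetchall())
# ['name', 'ward'] [('A. Lovelace', 'east')]
```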

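Three-Tier Architecture, while related, focuses on how the database interacts with applications and the underlying system. In this context it is usually described as the three tiers below, which mirror the conceptual, logical, and physical levels of data modeling (the term three-tier architecture is also widely used for an application's presentation, application, and data tiers):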

Conceptual Tier

This corresponds closely to the conceptual schema, defining the logical organization of data and the rules governing relationships between entities.

Logical Tier

Often overlaps with both conceptual and external schemas, implementing logical data models that applications use to query, manipulate, and validate data.

Physical Tier

Maps directly to the internal schema, representing how data is physically stored, optimized, and accessed.

These layers are easier to grasp if you separate three questions: what users see, how operations are managed, and how data is stored and moves internally. The idea is similar to client-server architecture, where clients interact with an application layer while the server handles data management, processing, and storage behind the scenes.

In practice, DB systems often blur the lines between these levels. For example, SQL syntax frequently mixes notions from external and conceptual schemas. Queries and table definitions can combine user-facing views with underlying logical models.

- DBMSs allow defining what databases and tables a particular user sees, roughly corresponding to external and conceptual schemas.

- The same SQL view mechanism serves both end-user views (external level) and DBA-defined logical views (conceptual level), so the two perspectives are not cleanly separated.

- SQL Data Definition Language (DDL) does not strictly separate user, DBA, and physical concerns: a single CREATE TABLE statement can declare logical constraints alongside storage options, so the system's logical and physical layers are often intertwined.
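SQLite makes this blurring easy to observe. In the sketch below, one `CREATE TABLE` statement combines a logical rule (a composite primary key) with a physical storage choice (`WITHOUT ROWID`, which stores rows clustered by that key), and the `sqlite_master` catalog lists the table, an index, and a user-facing view side by side. The `enrollments` schema is hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Logical rule (composite primary key) and physical choice (WITHOUT ROWID)
-- declared in the same statement.
CREATE TABLE enrollments (
    student_id INTEGER NOT NULL,
    course_id  INTEGER NOT NULL,
    PRIMARY KEY (student_id, course_id)
) WITHOUT ROWID;

CREATE INDEX idx_enrollments_course ON enrollments(course_id);  -- physical access path
CREATE VIEW course_sizes AS                                      -- user-facing view
    SELECT course_id, COUNT(*) AS students FROM enrollments GROUP BY course_id;
""")

# One catalog records objects from every abstraction level.
catalog = conn.execute(
    "SELECT type, name FROM sqlite_master WHERE name NOT LIKE 'sqlite_%' ORDER BY name"
).fetchall()
print(catalog)
# [('view', 'course_sizes'), ('table', 'enrollments'), ('index', 'idx_enrollments_course')]
```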

This flexibility means that while three-schema and three-tier architectures provide a useful framework, in practice the boundaries between user views, logical models, and physical storage are adaptable, allowing database systems to serve multiple needs efficiently.

By understanding three-schema and three-tier architectures, developers, DBAs, and system designers can better organize data, manage access, and optimize performance while keeping clear mental models of the system at different levels of abstraction.
